Protecting patient data is non-negotiable. If you're using AI phone systems in healthcare, ensuring they meet HIPAA requirements is critical to avoid fines of up to $50,000 per violation and protect patient trust. Here's what you need to know:

- HIPAA compliance is mandatory for systems handling Protected Health Information (PHI). This includes encryption, access controls, and secure data handling.

- A proposed HHS update to the HIPAA Security Rule, published in January 2025, would make all safeguards - technical, administrative, and physical - mandatory. Plan as though every safeguard is required.

- Key technical requirements include end-to-end encryption (AES-256, TLS 1.2+), multi-factor authentication, and six years of detailed audit logs.

- Administrative safeguards focus on risk assessments, workforce training, and signed Business Associate Agreements (BAAs) that address AI-specific features.

- Physical safeguards ensure secure data center environments with standards like SOC 2 and ISO 27001.

Verification steps:

- Request compliance documentation (e.g., BAA, SOC 2 Type II reports).

- Check encryption protocols and audit logs.

- Test incident response plans and data handling practices.

Common pitfalls include vendors making false compliance claims or lacking critical features like audit logs and role-based access controls. Always verify thoroughly to avoid costly breaches and maintain patient trust.

AI & HIPAA Compliance: What’s Allowed, What’s Risky, and How to Do It Right#

Implementing AI answering for healthcare providers requires a balance between operational efficiency and strict data protection.


HIPAA Compliance Requirements for AI Phone Systems#

HIPAA’s Security Rule groups its protections into technical, administrative, and physical safeguards, and all three apply to AI phone systems that handle PHI (Protected Health Information). In January 2025, the Department of Health and Human Services (HHS) published a proposed rule that would make these safeguards mandatory for both Covered Entities and Business Associates by removing the previous "required vs. addressable" distinction, with limited exceptions. Until a final rule takes effect, that distinction still applies, but the safest approach is to implement every safeguard. Each category plays a vital role in protecting PHI.

Technical Safeguards#

Technical safeguards secure PHI through technology. AI phone systems must encrypt data both at rest and in transit, typically with AES-256 for stored data and TLS 1.2 or higher for data transmission. These measures ensure that intercepted or stolen data remains unreadable.
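As a rough illustration of the "TLS 1.2 or higher" requirement, the sketch below builds a client-side TLS context with Python's standard `ssl` module and refuses older protocol versions. The helper name `make_tls_context` is an assumption made for this example; it is not taken from any vendor's system.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Return a client TLS context that rejects anything older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Set the floor explicitly so the policy is visible in code reviews.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_tls_context()
```

A context like this can be passed to standard-library clients such as `http.client.HTTPSConnection(host, context=ctx)`. Encryption at rest (for example AES-256) is usually handled by the storage layer or a vetted cryptography library rather than hand-written code.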

To prevent misuse of sensitive data, compliant systems should use zero-retention endpoints for any third-party AI models, so that patient conversations are not retained by the model provider or used for AI training. Access to PHI should be tightly controlled through multi-factor authentication (MFA), role-based access, and adherence to the principle of least privilege.
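To make role-based access and least privilege concrete, here is a minimal sketch that filters a patient record down to the fields a role is allowed to see and refuses access without MFA. The roles, field names, and the `filter_record` helper are hypothetical, chosen only for illustration.

```python
from enum import Enum


class Role(Enum):
    FRONT_DESK = "front_desk"
    PHYSICIAN = "physician"


# Hypothetical permission map: each role sees only the fields it needs.
ALLOWED_FIELDS = {
    Role.FRONT_DESK: {"name", "appointment_time", "appointment_notes"},
    Role.PHYSICIAN: {
        "name", "appointment_time", "appointment_notes",
        "diagnosis", "medications",
    },
}


def filter_record(record: dict, role: Role, mfa_verified: bool) -> dict:
    """Return only the fields the role may see; refuse access without MFA."""
    if not mfa_verified:
        raise PermissionError("MFA required before accessing PHI")
    # Unknown roles fall back to an empty set, i.e. deny by default.
    allowed = ALLOWED_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}
```

Denying by default means a newly added role sees nothing until someone grants it fields explicitly.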

Additionally, systems are required to maintain detailed audit logs for a minimum of six years. These logs should include user identification, timestamps, actions performed, and data accessed. Such records are crucial for ensuring accountability and assisting with compliance audits or security investigations.
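The log fields listed above can be pictured as a small record type. The sketch below is one possible shape, not a prescribed schema: the `AuditEntry` class, its field names, and the `may_purge` helper are assumptions for illustration, and the six-year window is approximated in days.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta, timezone

# Roughly six years; the two extra days cover leap years in the window.
RETENTION = timedelta(days=6 * 365 + 2)


@dataclass(frozen=True)
class AuditEntry:
    user_id: str         # who performed the action
    timestamp: datetime  # when it happened (timezone-aware, UTC)
    action: str          # what was done, e.g. "read" or "export"
    resource: str        # which data was accessed

    def to_json(self) -> str:
        """Serialize the entry as one JSON line for an append-only log."""
        data = asdict(self)
        data["timestamp"] = self.timestamp.isoformat()
        return json.dumps(data, sort_keys=True)


def may_purge(entry: AuditEntry, now: datetime) -> bool:
    """True only once the entry is older than the retention window."""
    return now - entry.timestamp > RETENTION
```

Keeping the retention check in one function makes it easy to confirm that no cleanup job deletes entries early.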

Administrative Safeguards#

While technical safeguards handle digital security, administrative safeguards focus on managing policies and personnel. Organizations must conduct AI-specific risk assessments and secure a signed Business Associate Agreement (BAA) that explicitly addresses the AI features in use. It’s important to verify that the BAA covers all relevant capabilities, such as voice or text processing.

Workforce training is also a key requirement. Currently, only 18% of healthcare employees are aware of specific policies regarding generative AI use in their organizations. Training programs should start with an overview of HIPAA regulations and progress to hands-on sessions with the AI phone system. Organizations must also establish an AI-specific incident response plan and conduct regular audits, including quarterly access log reviews and annual penetration testing.

Physical Safeguards#

Physical safeguards protect the hardware and infrastructure that store patient data. For cloud-based AI phone systems, this involves using secure platforms like AWS GovCloud, which operates in a FedRAMP High environment, or Virtual Private Cloud (VPC) setups to isolate sensitive data.

Data centers supporting these systems must comply with recognized security standards such as SOC 2 and ISO 27001. These standards enforce strict access controls and ensure a secure environment for physical infrastructure. Together with encryption, these physical protections provide an additional layer of security, even if storage media were compromised.

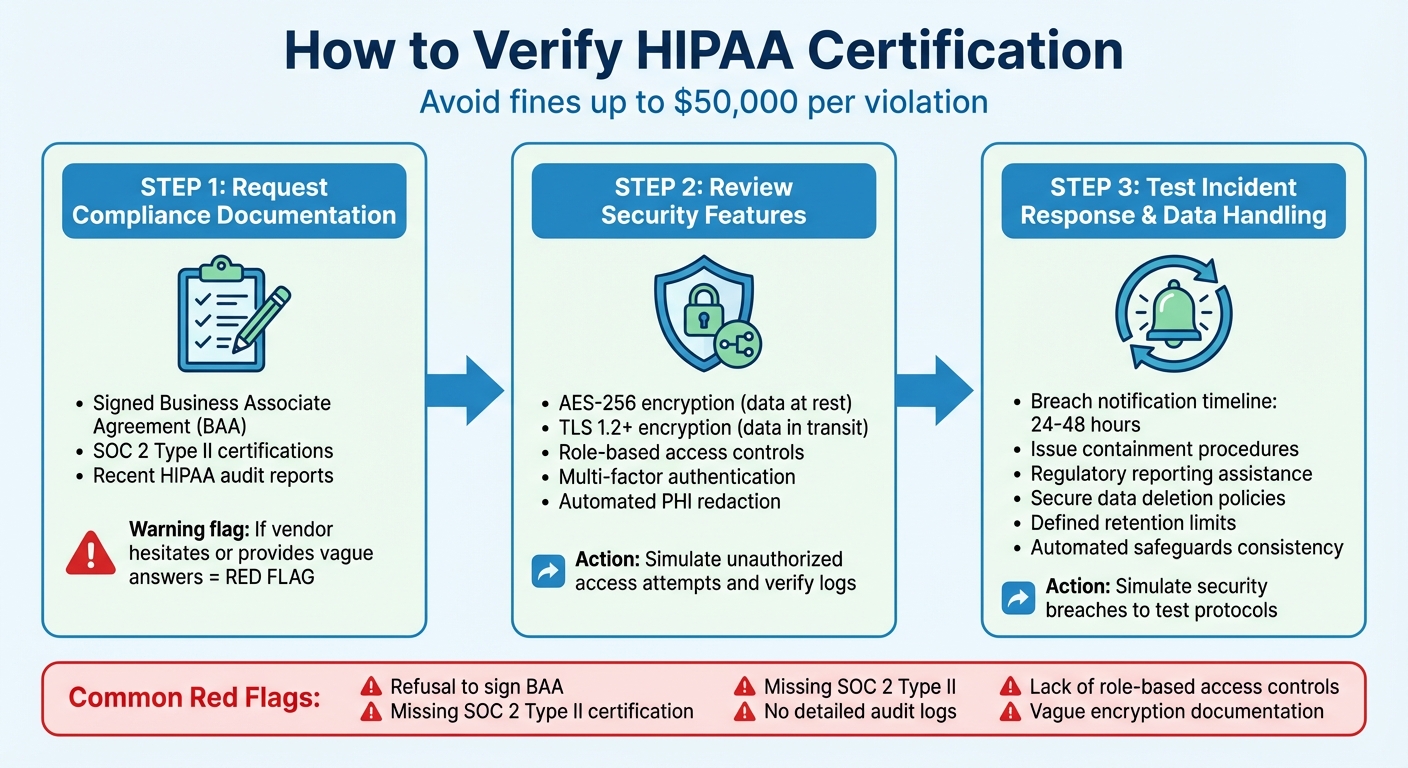

How to Verify HIPAA Certification#

HIPAA Compliance Verification Process for AI Phone Systems

Ensuring HIPAA compliance isn't as simple as taking a vendor's word for it. You need to dig deeper by checking documentation, examining security measures, and testing their response to incidents. Skipping these steps could lead to hefty fines of up to $50,000 per violation. Here's how to properly verify these safeguards.

Requesting Compliance Documentation#

Start by asking the vendor for clear and up-to-date compliance documents. Specifically, request a signed Business Associate Agreement (BAA). This agreement legally binds the vendor to protect protected health information (PHI) and report any breaches. Additionally, ask for a SOC 2 Type II report (an auditor's attestation, not a certification) and recent HIPAA audit reports to confirm they maintain compliance. Be cautious - if the vendor hesitates or provides vague answers, it could be a red flag.

Reviewing Security Features#

Dive into the technical details to verify security measures. Ask for documentation on key features like AES-256 encryption for data at rest, TLS 1.2 or higher for data in transit, role-based access controls, and multi-factor authentication. To ensure these safeguards are effective, simulate unauthorized access attempts and verify that logs capture all relevant access details. Confirm that automated PHI redaction is functioning as expected. These steps go hand-in-hand with the required technical safeguards, offering an added layer of confidence in their compliance.
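One way to spot-check redaction is to scan transcripts the vendor says are redacted for identifiers that should no longer be there. The sketch below is a simplified example: the three regular expressions cover only a few US-style formats and are assumptions for illustration, not a complete PHI detector.

```python
import re

# Hypothetical patterns for a few identifier formats; real PHI is far broader.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "DOB": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def leaks(text: str) -> list[str]:
    """Return the labels of any identifier patterns still present in text."""
    return [label for label, pattern in PATTERNS.items() if pattern.search(text)]
```

Running `leaks()` over a sample of stored transcripts gives a quick signal; an empty list means none of these particular patterns were found, not that the transcript is free of PHI.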

Testing Incident Response and Data Handling#

Put the vendor's incident response protocols to the test by simulating security breaches. Check that they notify you within 24–48 hours, contain the issue promptly, and assist with regulatory reporting. Review their formal incident response plan, usually detailed in the BAA, to make sure it aligns with HIPAA standards. Also, assess their data handling practices - ensure they have secure data deletion policies, clearly defined retention limits, and consistently applied automated safeguards. These checks round out the administrative protocols, giving you a full picture of their compliance efforts.

Common Verification Challenges#

When it comes to verifying compliance, even with proper documentation and established security controls, challenges often arise. Misleading compliance claims, in particular, can put your business at serious risk. Some vendors may assert they are HIPAA-compliant but fail to implement the necessary safeguards.

Spotting False Compliance Claims#

One major red flag is a vendor's refusal to sign a Business Associate Agreement (BAA). This legally required document is not optional - without it, a vendor cannot handle Protected Health Information (PHI) in compliance with HIPAA regulations. Beyond the BAA, vendors should provide clear and detailed encryption and audit documentation. Vague or incomplete descriptions often hint at non-compliance.

Another critical area to examine is third-party audit reports. The absence of a SOC 2 Type II report or up-to-date HIPAA audit reports is a warning sign. Additionally, systems that lack role-based access controls or fail to maintain detailed audit logs are problematic. These features are essential for tracking who accesses patient information and when, a fundamental HIPAA requirement. Before committing to any vendor, insist on concrete evidence of these safeguards. Non-compliance can lead to penalties as steep as $50,000 per violation. Beyond documentation, ensuring the integrity of AI systems in handling patient data is equally important.

Verifying Conversational Guardrails#

AI systems, particularly those involved in patient interactions, require rigorous safeguards. These systems often manage PHI during tasks like scheduling, prescription refills, or insurance verification. To prevent accidental disclosures, strong conversational guardrails are a must.

For example, the AI should always verify the caller’s identity before sharing PHI, avoid reading sensitive medical history unless absolutely necessary, and never transfer calls to unverified parties without proper authorization. Given the volume of calls in healthcare, manual reviews alone aren’t enough. Automated, scenario-based testing is crucial to ensure these safeguards are functioning correctly.

Healthcare teams often rely on tools like Hamming to conduct these tests. These tools simulate real-world scenarios to evaluate whether the AI consistently prompts for identity verification or refuses to disclose sensitive information without proper confirmation. The testing process combines straightforward pass/fail criteria (e.g., "Did the AI verify the caller's identity?") with more advanced methods to assess how the system handles nuanced situations across various workflows. Without thorough testing, there’s no guarantee that your AI system will meet HIPAA standards or protect patient privacy effectively.
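The pass/fail idea can be sketched in a few lines. This is a toy harness, not Hamming's API or any vendor's: `Scenario`, `toy_agent`, and `run_scenario` are hypothetical names, and the agent is a stand-in for the real system under test.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    caller_turns: list[str]
    identity_verified: bool
    phi_markers: list[str]  # strings that must not reach an unverified caller


def toy_agent(turns: list[str], identity_verified: bool) -> list[str]:
    """Stand-in for the AI receptionist; replace with calls to the real system."""
    replies = []
    for turn in turns:
        if "refill" in turn.lower():
            if identity_verified:
                replies.append("Your lisinopril refill is ready.")
            else:
                replies.append("Before I can help, please confirm your date of birth.")
        else:
            replies.append("How can I help you today?")
    return replies


def run_scenario(agent: Callable[[list[str], bool], list[str]], s: Scenario) -> bool:
    """Pass if the caller is verified or no PHI marker appears in any reply."""
    replies = agent(s.caller_turns, s.identity_verified)
    leaked = any(m.lower() in r.lower() for r in replies for m in s.phi_markers)
    return s.identity_verified or not leaked
```

In practice each workflow (scheduling, refills, insurance verification) would get its own set of scenarios, and a failing scenario would block a release until the guardrail is fixed.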

My AI Front Desk's HIPAA Compliance Features#

My AI Front Desk ensures compliance with HIPAA regulations through advanced encryption, user-friendly workflows, and flexible white-label options, making it a reliable choice for healthcare providers and resellers.

Encrypted Call and Data Management#

To safeguard sensitive patient information (PHI), My AI Front Desk employs end-to-end encryption for voice calls and AES-256 encryption for data at rest, including call recordings and transcripts. This layered security aligns with the HIPAA Security Rule's technical safeguards. Secure CRM integration ensures that information access is restricted: front desk staff can view appointment notes, while physicians can access complete records. This approach adheres to the HIPAA Privacy Rule's "minimum necessary" standard.

Post-call, encrypted webhooks securely transmit data to external systems, creating audit trails that are both immutable and essential for compliance reviews. Notifications alert authorized personnel about call content without exposing unnecessary PHI. These features meet HIPAA's audit log requirements and enable rapid breach detection and documentation, typically within 24–48 hours. This proactive approach helps mitigate risks of violations, which can result in fines of up to $50,000 per incident.
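For readers wondering what makes an audit trail tamper-evident, one common technique is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below illustrates that general idea only; it is an assumption for explanation and does not describe how My AI Front Desk implements its logs.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_entry(chain: list[dict], event: dict) -> None:
    """Append an event whose hash covers both the event and the prior hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    chain.append({"event": event, "prev_hash": prev_hash, "hash": digest})


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on everything before it, changing an old entry invalidates every later one, which is what lets a reviewer detect after-the-fact edits.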

Simplified Compliance for Small Businesses#

For smaller practices lacking dedicated IT teams, My AI Front Desk integrates seamlessly with Google Calendar, using TLS 1.2+ encryption for real-time scheduling. The system collects only the minimal PHI necessary for appointments, reducing manual errors while supporting HIPAA's administrative safeguards, such as risk assessments. Multi-language support ensures that non-English calls benefit from the same encryption and access controls, maintaining consistent PHI protection.

Customizable workflows further simplify compliance by ensuring only essential PHI is collected. Built-in features like redaction tools, audit logs, active times control, and auto hangup reduce exposure risks without requiring complex configurations. The platform also includes 200 free minutes per month (approximately 170–250 calls) and integrates with over 9,000 apps via Zapier, enabling automated workflows.

White-Label Solutions for Resellers#

Through its white-label program, My AI Front Desk allows agencies and VoIP providers to offer HIPAA-compliant AI receptionists under their branding. Features like tiered access controls and analytics dashboards help resellers monitor PHI usage patterns without exposing actual data. This setup supports risk assessments and encryption verification. Stripe rebilling simplifies payment processes, enabling flexible billing in just a few clicks.

The analytics dashboard provides detailed insights into call activity and PHI interactions via immutable logs, meeting HIPAA's administrative requirements for ongoing monitoring. Resellers also benefit from 24/7 technical support and options like domain routing or iframe embedding, ensuring compliance while keeping vendor branding invisible. My AI Front Desk signs Business Associate Agreements (BAAs) with healthcare accounts, clearly defining PHI handling responsibilities, breach notification timelines (within 24–48 hours), and secure deletion policies. These agreements extend to resellers, ensuring HIPAA compliance across all applications of the platform.

Conclusion#

Key Points for Small Businesses#

Safeguarding patient data and ensuring compliance with HIPAA regulations is essential for small businesses. To achieve this, it’s crucial to request a signed Business Associate Agreement (BAA), confirm the use of end-to-end encryption (such as AES-256) and TLS 1.2+ protocols, and verify the presence of detailed audit logs. Without these protective measures, your practice could face fines of up to $50,000 per violation, with penalties that can reach roughly $2.1 million per year for repeated violations of the same requirement. Additionally, the stakes are high when it comes to patient trust - 61% of patients say they would stop using a healthcare provider after a data breach.

Maintaining compliance isn’t a one-time task. Regular testing and reviews are necessary. For example, scenario-based testing can help ensure the AI system verifies patient identity before sharing Protected Health Information (PHI), blocks unverified call transfers, and keeps accurate audit logs for all workflows. Ongoing risk assessments and staff training are also key to staying compliant as regulations change.

By implementing these measures, businesses not only meet compliance requirements but also gain operational advantages.

Benefits of Trusted AI Solutions#

Taking compliance a step further, solutions like My AI Front Desk simplify the process with features such as encrypted infrastructure, automated audit trails, and role-based access. This eliminates the need for a dedicated IT team. The product is tailored for small businesses, offering 200 free minutes per month (roughly 170–250 calls), unlimited parallel calls, and integrations with over 9,000 apps via Zapier - all while maintaining strict security to safeguard patient data.

Beyond compliance, AI phone systems can deliver tangible benefits. They can reduce phone time by up to 70%, lower no-show rates by 15–20%, and provide 24/7 patient access. One clinic reported saving over $30,000 annually in labor costs after switching to an automated AI receptionist. For resellers, the white-label program simplifies billing and client management, offering tiered access controls and round-the-clock technical support, ensuring secure and efficient service delivery.

Selecting a HIPAA-compliant AI phone system not only shields your practice from legal risks but also improves patient experience and operational performance. My AI Front Desk offers the tools, security, and support to manage sensitive health information with confidence.

FAQs#

What proof should I ask a vendor for to verify HIPAA compliance?#

To confirm HIPAA compliance, ask for a signed Business Associate Agreement (BAA), a SOC 2 Type II report, details on encryption protocols, sample audit trails, documentation of secure infrastructure measures, and recent risk assessments. These documents show how the vendor actually protects sensitive data and meets HIPAA requirements.

Does 'HIPAA-certified' mean anything for AI phone systems?#

Not on its own. HHS does not certify products or endorse third-party HIPAA certifications, so there is no official "HIPAA-certified" status. When a vendor uses the label, treat it as a marketing claim and verify what sits behind it: a signed BAA, encryption, access controls, and audit logs. Those safeguards, not the label, are what protect patient data and reduce the risk of legal complications or penalties.

How can I test that an AI receptionist won’t disclose PHI to the wrong caller?#

To make sure an AI receptionist doesn’t share protected health information (PHI) with the wrong people, it’s essential to put it through behavioral testing. Set up simulated calls where different identities are used, and check if the AI properly verifies the caller’s identity before providing any sensitive details.

Strengthen security by implementing role-based access controls and multi-factor authentication. Additionally, ensure the AI is trained to follow strict verification protocols, minimizing the risk of unauthorized disclosures. Regular testing and updates are key to identifying and fixing any weaknesses in compliance or authentication procedures.