When it comes to multilingual AI, Claude 3.5 Sonnet and GPT-4 are two leading models, each excelling in different areas:

- Claude prioritizes technical precision and consistent results, making it ideal for tasks like coding, technical documentation, and professional translations.

- GPT-4 delivers fluent, context-aware, and emotionally nuanced translations, excelling in creative tasks, marketing content, and customer support.

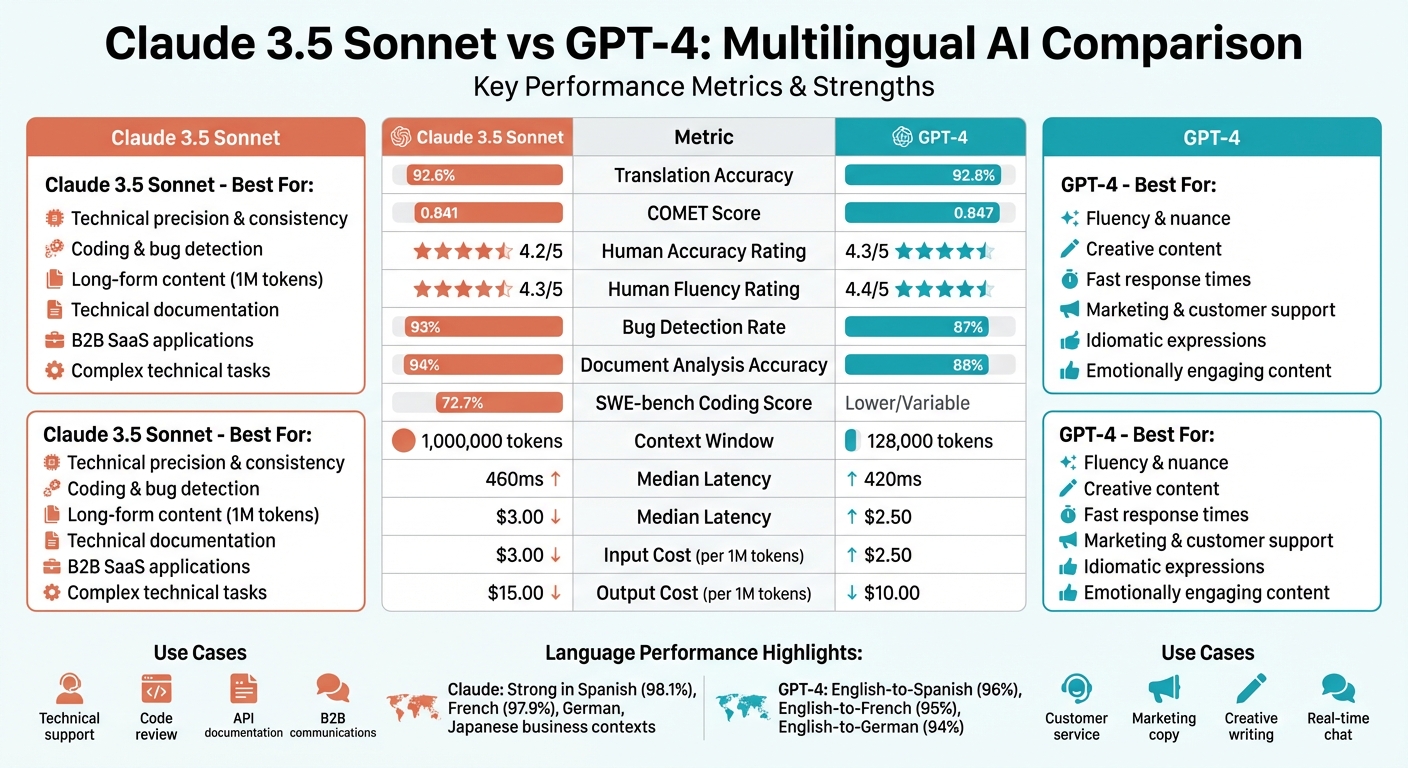

Key Findings:#

- Translation Accuracy: GPT-4 averages 92.8% across major languages; Claude follows closely at 92.6%.

- Strengths:

- Claude: Handles complex technical tasks (e.g., identifying 93% of coding bugs) and long-form content with up to 1 million tokens.

- GPT-4: Excels in nuanced translations, idiomatic language, and fast response times (420ms median latency).

- Cost: GPT-4 is more affordable, with input costs 17% lower and output costs 33% lower than Claude.

- Language Performance:

- Claude: Strong in Spanish, French, German, and Japanese business contexts, but struggles with lower-resource languages like Yoruba.

- GPT-4: Slightly better in high-resource languages but less consistent in morphologically complex ones like Arabic.

Quick Comparison#

| Feature | Claude 3.5 Sonnet | GPT-4 |

|---|---|---|

| Translation Accuracy | 92.6% | 92.8% |

| Strengths | Technical precision | Fluency, nuance |

| Context Window | 1 million tokens | 128,000 tokens |

| Cost (per 1M tokens) | Input: $3.00 | Input: $2.50 |

| Bug Detection Rate | 93% | 87% |

| Speed (Median Latency) | 460ms | 420ms |

Both models are highly effective when used strategically. Claude suits technical and B2B tasks, while GPT-4 is better for creative and customer-facing needs. Combining their strengths can optimize multilingual customer support and translation workflows.

Claude 3.5 Sonnet vs GPT-4 Multilingual AI Comparison Chart

Claude vs GPT-4 for Coding: Real Benchmarks Exposed (2026 Data)#

sbb-itb-e4bb65c

Claude's Multilingual Performance#

Claude demonstrates strong technical precision and consistency across multiple languages. For example, its performance in Spanish reaches 98.1% of its English baseline accuracy, while French achieves 97.9%. Other languages like German, Italian, and Portuguese also perform impressively, each exceeding 97% relative accuracy.

Complex Query Processing#

Claude stands out in handling complex multilingual technical tasks. Unlike some models, it openly acknowledges uncertainty rather than providing potentially misleading information. In a notable comparison, Claude 3.5 Sonnet identified 93% of bugs during production code testing, outperforming GPT-4's 87%. Its large 200,000-token context window enables it to process entire codebases while maintaining cross-references.

"Claude 3.5 Sonnet has become the quality leader for professional work." - AI Tool Briefing Team

The model's ability to engage in "extended thinking" further strengthens its capacity to tackle challenging mathematical or technical problems across languages. For instance, Claude successfully identified a race condition in async Python code and suggested three practical fixes, surpassing GPT-4's less effective solution.

This technical strength ensures Claude consistently delivers accurate results across its key languages. This reliability is essential for businesses implementing multilingual support in phone systems to ensure seamless global communication.

Top Languages for Claude#

Claude's expertise shines in its core language pairs, including Spanish, French, German, Italian, and Portuguese, where translation accuracy scores range between 95% and 96%. In Japanese business contexts, Claude handles polite language and honorifics effectively, making it a top choice for B2B SaaS companies operating in Japan looking to overcome linguistic nuances.

In human evaluations of UI string translations, Claude Sonnet achieved scores of 96% for English-to-French and 95% for English-to-German translations. Its accuracy in Arabic reaches 97.1% relative to English. However, performance drops in lower-resource languages like Yoruba, where accuracy is 80.3%.

GPT-4's Multilingual Performance#

GPT-4 delivers translations that are both fluent and context-aware, earning scores of 4.4/5 for fluency and 4.3/5 for accuracy across ten language pairs. Its COMET score of 0.847 surpasses Claude 3.5's 0.841. The model excels at conveying subtle meanings, handling idiomatic expressions, and adapting to regional language differences - like distinguishing between Latin American and Spain Spanish - ensuring translations fit the context. It also navigates complex linguistic rules, such as pluralization and honorifics, without needing explicit instructions or complex setup.

These strengths highlight GPT-4's ability to balance precision and speed across languages.

Cross-Language Consistency#

GPT-4 demonstrates impressive accuracy in high-resource European languages. For example, a 2026 human evaluation of UI string translations revealed 96% accuracy for English-to-Spanish, 95% for English-to-French, and 94% for English-to-German. The model consistently applies custom glossaries and style guides, ensuring brand-specific terms remain uniform across translations.

However, its performance varies with language complexity. Japanese translations scored 91% accuracy, while Arabic translations reached 88%. For morphologically complex languages, GPT-4 can occasionally produce results that feel less natural compared to human translators. To enhance fluency, providing domain-specific context in the prompt - such as "Translate for a medical app" - helps the model choose the most suitable terminology.

Speed in Multilingual Conversations#

Speed is critical for live, multilingual interactions, and GPT-4o is designed to deliver. It boasts a sub-0.2-second time-to-first-token, ensuring rapid responses in real-time scenarios like customer support. In translation benchmarks, GPT-4 achieves a median latency of 420 ms, outperforming Claude 3.5 Sonnet, which averages 460 ms.

"GPT-4 writes with more… personality. There's an energy to its creative output that Claude lacks." – AI Tool Briefing Team

In customer support, GPT-4o's precision shines with an 88% ticket classification accuracy, significantly reducing false positives compared to Claude 3 Opus's 72%. Its error rate for culturally nuanced content is approximately 3%, far better than GPT-4.1's 12%. This blend of speed and accuracy makes GPT-4o particularly effective for live chat and voice-based multilingual interactions, where quick, accurate responses are key to user satisfaction.

Performance Comparison by Benchmark#

Benchmark Results Table#

Here's how Claude and GPT-4 stack up against each other on standardized tests:

| Metric | Claude 3.5/4 Sonnet | GPT-4 / GPT-4o |

|---|---|---|

| SWE-bench (Coding) | 72.7% | Lower/Variable |

| Bug Detection Rate | 93% | 87% |

| Document Analysis Accuracy | 94% | 88% |

| COMET Score (Translation) | 0.841 | 0.847 |

| Human Accuracy Rating (1-5) | 4.2 | 4.3 |

| Human Fluency Rating (1-5) | 4.3 | 4.4 |

| Context Window | 1,000,000 tokens | 128,000 tokens |

What the Numbers Show#

The data highlights where each model shines and how they differ in practical performance.

Claude 4 Sonnet demonstrates strong technical capabilities, particularly in coding and software development. Its 72.7% score on SWE-bench coding tasks and 93% bug detection rate reflect an edge in handling technical and analytical challenges . The model's ability to process up to 1 million tokens - eight times more than GPT-4o - offers a major advantage for tasks like analyzing entire multilingual codebases in one go.

"Claude 4 Sonnet leads in technical performance with a state-of-the-art 72.7% SWE-bench coding score, superior debugging capabilities, and better long-form analytical content generation." – Multibly

On the other hand, GPT-4 excels in areas requiring nuance and creativity. It slightly outperforms Claude in human ratings for accuracy (4.3/5) and fluency (4.4/5), particularly in handling idiomatic language and culturally sensitive material. Additionally, its quick response time - averaging 0.5 seconds to generate the first token - makes it an excellent choice for real-time multilingual interactions.

In practice, many organizations are combining both models to maximize their strengths. Claude often handles tasks involving technical documentation and code-heavy workflows, while GPT-4 is favored for creative writing, marketing content, and customer support scripts . Both models perform exceptionally well on basic multilingual classification tasks, scoring between 97.5% and 100%, making the decision largely task-dependent rather than purely performance-based. These insights help businesses choose the right tool for specific needs, from detailed technical analysis to emotionally engaging content.

Practical Applications for Multilingual AI#

Customer Support in Multiple Languages#

Different AI models shine in distinct customer support scenarios. GPT-4 is particularly effective in B2C support, where its conversational tone and ability to engage emotionally make it a strong contender. It scores 4.2 out of 5 for handling social nuances, such as Japanese honorifics or navigating formal versus casual language registers.

On the other hand, Claude 3.5 Sonnet is better suited for B2B and technical support. Its standout feature is "intellectual honesty", where it admits uncertainty instead of generating plausible but incorrect answers. This quality fosters trust in professional help desk settings. For businesses juggling high-volume multilingual support, platforms like My AI Front Desk combine the strengths of both models. They route queries to the most suitable AI based on factors like complexity and tone, ensuring efficient and accurate responses.

But customer support is just one area where multilingual AI shines. Translation and localization are equally critical for connecting with global audiences.

Translation and Localization#

While customer support relies on conversational adaptability, localization demands accuracy and cultural sensitivity to resonate with diverse audiences.

GPT-4 stands out in marketing content adaptation, handling context-sensitive terms with ease. For example, it can distinguish between different meanings of a word like "Save", making it particularly effective for localizing user interfaces and customer-facing dashboards.

Meanwhile, Claude excels in maintaining brand consistency across long-form content. Its ability to follow brand glossaries and style guides makes it ideal for technical manuals, API documentation, and detailed support guides that require a consistent professional tone. Both models perform far better than traditional translation tools when addressing regional language variants - like the differences between Latin American and Peninsular Spanish - provided that prompts include specific regional context.

Choosing the Right Model for Your Business#

Matching Models to Language Requirements#

Selecting the best AI model for your business hinges on the type of conversations you handle regularly. For example, if your team manages a technical support desk or answers detailed product inquiries, precision is key. Claude identifies 93% of bugs, outperforming GPT-4's 87% accuracy in these scenarios. This level of detail is especially critical for troubleshooting in languages like German or Japanese.

For high-volume interactions, where speed is more important than deep analysis, GPT-4o shines with response times of 1–3 seconds, compared to Claude's 2–4 seconds. This speed advantage makes a big difference in fast-paced environments. Additionally, GPT-4 scored 4.2 out of 5 in human evaluations for cultural appropriateness, making it ideal for marketing conversations that rely on emotional resonance.

Cost considerations also play a role. GPT-4o is priced at $2.50 per million input tokens and $10.00 per million output tokens, while Claude 4 Sonnet costs $3.00 and $15.00, respectively. For businesses handling thousands of multilingual calls each month, GPT-4o’s 17% lower input costs and 33% lower output costs can add up to significant savings, potentially reducing operating expenses by hundreds of dollars.

These differences in performance and cost are key to building an efficient, multilingual support system tailored to your business.

My AI Front Desk Multilingual Features#

Our platform takes advantage of these performance distinctions to optimize AI model usage for your needs. My AI Front Desk integrates both Claude and GPT-4 via its Premium AI Models feature, intelligently routing calls based on their complexity. For instance, if a Spanish-speaking customer calls to schedule an appointment, the system uses GPT-4o, leveraging its quick response time and conversational ease. On the other hand, when a French-speaking client asks for a detailed explanation of policies or technical specifications, Claude steps in for its superior long-context handling and accuracy.

With Unlimited Parallel Calls, your business can manage interactions in multiple languages simultaneously without any drop in performance. Imagine a dental office in Miami answering English and Spanish calls at the same time, maintaining consistent quality across both. The platform's Analytics Dashboard provides insights into which languages drive better conversions and highlights areas where accuracy might need improvement. Paired with Multi-Language Support and Post-Call Webhooks, the system ensures seamless integration with your CRM, sending conversation data in the caller's native language. This ensures your team has all the context they need for effective follow-ups, without anything getting lost in translation.

Conclusion#

Claude and GPT-4 bring complementary strengths to multilingual conversations, each excelling in distinct areas. GPT-4 shines when it comes to creating emotionally engaging, imaginative content, while Claude stands out for its technical precision and consistency. As previously highlighted, GPT-4 is particularly adept at nuanced, context-sensitive translations, whereas Claude captures technical details that are often critical in professional settings.

"GPT-4 leads in COMET and human evaluation, excelling in nuanced, context-dependent translation" - IntlPull Team

The key lies in strategically combining these two models, assigning tasks to the one best suited for the job. This approach effectively addresses the multilingual AI challenges discussed throughout this article, balancing creative engagement with technical accuracy for practical applications.

My AI Front Desk takes full advantage of these strengths by integrating both models into its Premium AI Models feature. For example, GPT-4 ensures natural, conversational responses to quick customer queries, while Claude’s ability to handle long, detailed contexts makes it perfect for more complex explanations. With Multi-Language Support, the system seamlessly manages non-English interactions, and the Analytics Dashboard provides valuable insights into multilingual performance. Impressively, it maintains over 95% of its English-language accuracy in major languages like Spanish, French, and German.

Whether you're a dental office in Miami juggling English and Spanish calls or a law firm in Los Angeles assisting Mandarin-speaking clients, this dual-model strategy offers professional, reliable support 24/7. It’s a powerful solution for small businesses serving diverse communities, ensuring accuracy and responsiveness at every step.

FAQs#

Which model is better for real-time multilingual customer support?#

When it comes to real-time multilingual customer support, Claude often stands out as the stronger option. It performs well across numerous languages, even less commonly spoken ones, and achieves near-English levels of accuracy in zero-shot tasks. While GPT-4 also supports multiple languages, comparisons indicate that Claude’s consistent reliability makes it a great fit for situations requiring accurate, real-time multilingual responses.

How do I choose between Claude and GPT-4 for my specific languages and use case?#

When deciding between Claude and GPT-4, it's important to weigh their multilingual capabilities. Claude stands out for its reliable accuracy across various languages like Spanish, French, German, Chinese, Japanese, and Korean. It also performs impressively in zero-shot tasks, handling challenges without prior examples. On the other hand, GPT-4 shines in delivering high-quality translations and ensuring culturally nuanced results, making it a strong choice for intricate multilingual projects. The best option will depend on the specific languages and tasks central to your needs.

How can I combine Claude and GPT-4 in one multilingual workflow without losing consistency?#

To get the best results from Claude and GPT-4 in a multilingual workflow, it's all about playing to their strengths while keeping the process seamless. Start by using standardized prompts and consistent evaluation criteria - this ensures outputs are aligned and predictable across both models.

One way to divide tasks is to let one model handle the initial understanding or translation phase. Then, use the other model to refine and polish the output, ensuring it’s accurate and nuanced. Regular testing and fine-tuning in the relevant languages are key to maintaining precision and respecting language-specific subtleties. This approach ensures your workflow stays reliable and adaptable across multiple languages.